Ethical issues in computer vision require a careful balance between innovation and responsibility. Stakeholders must treat autonomy, safety, and fairness as core concerns. Data practices (collection, labeling, access, and governance) shape outcomes and livelihoods. Transparent evaluation and clear accountability mechanisms are essential to trust. Policies, standards, and collaborative governance guide responsible deployment. The path forward calls for ongoing dialogue among researchers, communities, and organizations to align technical possibilities with human dignity and diverse needs, because many essential questions remain unresolved as the field progresses.

What Is at Stake in Computer Vision Ethics

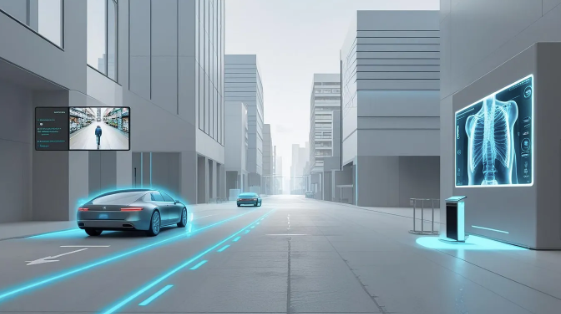

The stakes in computer vision ethics center on how this technology shapes autonomy, safety, and fairness in daily life. Institutions weigh risks and opportunities, balancing innovation with responsibility.

Untangling bias requires careful evaluation of both systems and their outcomes. Public trust hinges on transparent processes, informed participation, and attention to consent fatigue, while protecting individual dignity and empowering people to shape the technology's trajectory.

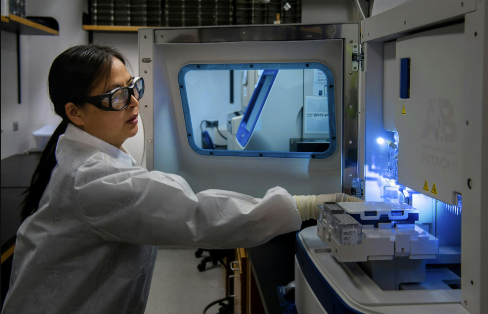

Data Practices That Shape Outcomes

Data practices shape outcomes by determining what data are collected, how they are labeled, and who can access them. Responsibility for these choices is shared among researchers, institutions, and communities, all of whom must uphold transparency and accountability.

Dataset governance and labeling quality call for robust standards, verifiable provenance, and continuous improvement, so that stakeholders understand the implications of each dataset and trust is preserved as technological ambitions evolve.
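Labeling quality is easier to govern when it is measured. One widely used check, offered here as an illustration rather than a method the text prescribes, is Cohen's kappa: agreement between two annotators on the same items, corrected for the agreement expected by chance. The sketch assumes exactly two annotators and categorical labels; the function name `cohens_kappa` and the example labels are our own.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: agreement between two annotators, corrected for chance."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Observed agreement: fraction of items on which both annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if each annotator labeled independently at their own base rates.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        # Both annotators used one identical label everywhere; kappa is undefined,
        # and this sketch reports perfect agreement by convention.
        return 1.0
    return (observed - expected) / (1 - expected)


a = ["pedestrian", "pedestrian", "cyclist", "cyclist"]
b = ["pedestrian", "cyclist", "cyclist", "cyclist"]
print(cohens_kappa(a, b))  # prints 0.5
```

A low kappa can flag ambiguous labeling guidelines worth revisiting before the data are used for training; on its own, it does not show whether the labels encode bias.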

Fairness, Privacy, and Accountability in Practice

In practice, fairness and privacy depend on concrete commitments to bias mitigation and data minimization, both recurring concerns among stakeholders.

Transparent evaluation, inclusive feedback, and ongoing governance help preserve autonomy while enabling responsible innovation for diverse users.
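Data minimization can be made concrete at the point where records are stored. The sketch below assumes a hypothetical camera pipeline whose only stated purpose is counting foot traffic per zone; the record types and field names (`RawDetection`, `face_embedding`, and so on) are illustrative, not taken from any particular system. Identifying fields are dropped and timestamps are coarsened to the hour before anything is kept.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class RawDetection:
    # Hypothetical record as emitted by a camera pipeline.
    camera_id: str
    timestamp: datetime
    zone: str
    face_embedding: tuple[float, ...]
    image_path: str


@dataclass(frozen=True)
class MinimizedDetection:
    # Only what a per-zone foot-traffic count needs: where, and roughly when.
    zone: str
    hour: datetime


def minimize(det: RawDetection) -> MinimizedDetection:
    """Drop identifying fields and coarsen the timestamp to the start of the hour."""
    hour = det.timestamp.replace(minute=0, second=0, microsecond=0)
    return MinimizedDetection(zone=det.zone, hour=hour)


def hourly_counts(detections) -> Counter:
    """Aggregate minimized records into counts per (zone, hour)."""
    return Counter(minimize(d) for d in detections)
```

Minimizing at ingestion, rather than filtering later, means the identifying fields never reach storage; whether hourly granularity is coarse enough depends on how busy each zone is.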

Building Trustworthy Vision Systems: Policies, Standards, and Responsibilities

Building trustworthy vision systems requires concrete policies, clear standards, and defined responsibilities that translate ethical considerations into everyday practice. Governance works best as a collaborative effort that balances innovation with safeguards. Algorithmic bias should be treated as a measurable risk, with accountability for implementation shared across developers, operators, and institutions. Transparent reporting, continuous evaluation, and inclusive stakeholder input sustain trustworthy deployment and public confidence.

Frequently Asked Questions

How Do We Audit Bias Beyond Accuracy Metrics?

Bias auditing examines dataset provenance, representation gaps, and model behavior across groups, using counterfactual tests and interpretability tools; stakeholders then weigh the resulting fairness trade-offs. Rather than relying on accuracy alone, an audit supports governance, transparency, and ongoing monitoring.
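A minimal illustration of looking past aggregate accuracy is to compute error rates separately for each group and report the largest gap. The sketch assumes a binary task (for example, match versus no match) with a group label available for every evaluation example; false positive and false negative rates are common choices, but which metrics matter is itself one of the fairness trade-offs stakeholders must weigh. The function names are hypothetical, and the sketch does not cover the counterfactual or interpretability analyses mentioned above.

```python
import math
from collections import defaultdict


def group_error_rates(y_true, y_pred, groups):
    """Per-group false positive rate (FPR) and false negative rate (FNR).

    Labels are binary: 1 = positive (e.g. "match"), 0 = negative.
    """
    tallies = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth:
            tallies[group]["tp" if pred else "fn"] += 1
        else:
            tallies[group]["fp" if pred else "tn"] += 1

    rates = {}
    for group, c in tallies.items():
        negatives = c["fp"] + c["tn"]
        positives = c["tp"] + c["fn"]
        rates[group] = {
            # NaN when a group has no examples of that class, so the rate is undefined.
            "fpr": c["fp"] / negatives if negatives else math.nan,
            "fnr": c["fn"] / positives if positives else math.nan,
        }
    return rates


def max_gap(rates, metric):
    """Largest difference in `metric` between any two groups, ignoring undefined rates."""
    values = [r[metric] for r in rates.values() if not math.isnan(r[metric])]
    return max(values) - min(values) if values else math.nan


# Overall accuracy is 75% (6 of 8 correct), but every error falls on group B.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
rates = group_error_rates(y_true, y_pred, groups)
print(rates)  # {'A': {'fpr': 0.0, 'fnr': 0.0}, 'B': {'fpr': 0.5, 'fnr': 0.5}}
print(max_gap(rates, "fpr"))  # 0.5
```

Per-group rates computed on small groups are noisy, so sample sizes and confidence intervals deserve attention before drawing conclusions from a gap.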

What Rights Do Individuals Have Over AI-Generated Inferences?

Individuals retain privacy rights over inferences that AI systems draw about them, and consent mechanisms should govern how those inferences are used. People have less visibility into and control over inferred data than over data they provide directly, which calls for careful stewardship, transparent notices, and stakeholder dialogue that respect autonomy while enabling innovation.

Can Vision Systems Be Made Explainable to Non-Experts?

Yes. Vision systems can be made explainable to non-experts through explainable interfaces and human-centered visual explanations, which support understanding while preserving autonomy. Designed with stakeholders in mind, such explanations give people what they need to make free and informed choices.


How Should Liability Be Allocated for Misidentifications?

Liability is best treated as a shared responsibility. Allocating liability and risk for misidentifications should reflect system design, deployment context, and accountability pathways, making fault lines transparent among developers, users, and operators, with safeguards for freedom and a voice for affected stakeholders.

What Are the Long-Term Societal Impacts of Surveillance Cameras?

Surveillance cameras shape long-term social norms, potentially normalizing oversight and silencing dissent. Stakeholders weigh privacy creep and data retention against security; balanced governance could preserve freedoms while enabling accountability and proportionate protections for vulnerable communities.
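Data retention is one of the levers named above, and a retention policy is easiest to audit when it is enforced in code. The sketch below assumes a hypothetical fixed 30-day window for stored clips; the `Clip` type, the `apply_retention` function, and the window length are illustrative choices, not recommendations, and the appropriate period depends on each deployment's legal and proportionality requirements.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical policy window; the appropriate length is a governance decision.
RETENTION = timedelta(days=30)


@dataclass(frozen=True)
class Clip:
    clip_id: str
    recorded_at: datetime  # expected to be timezone-aware (UTC)


def apply_retention(clips, now=None, retention=RETENTION):
    """Split clips into (kept, expired) relative to a fixed retention window."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - retention
    kept, expired = [], []
    for clip in clips:
        # Clips recorded at or after the cutoff are still within the window.
        (kept if clip.recorded_at >= cutoff else expired).append(clip)
    return kept, expired


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
clips = [
    Clip("old", datetime(2024, 4, 1, tzinfo=timezone.utc)),
    Clip("new", datetime(2024, 5, 20, tzinfo=timezone.utc)),
]
kept, expired = apply_retention(clips, now=now)
print([c.clip_id for c in kept], [c.clip_id for c in expired])  # ['new'] ['old']
```

Returning the expired clips, rather than deleting them inside the function, keeps the policy decision separate from the deletion step, which makes it easier to log and review what was purged.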

Conclusion

Ethical computer vision rests on careful governance that bridges innovation with public trust. Stakeholders must persistently examine data practices, from collection to labeling, to curb bias and protect privacy. Evidence that facial recognition systems trained on unrepresentative data can amplify bias highlights the urgency of representative data and transparent evaluation. By aligning policies, standards, and accountability, institutions can foster responsible deployment, safeguard dignity, and ensure systems serve broad and varied communities.